Robots



Robots I Have Worked With

uBot-6

This is a dynamically balancing, toddler-sized humanoid built at the Laboratory for Perceptual Robotics, University of Massachusetts Amherst. The robot is equipped with ATI Mini45 load cells on both hands. I record force-torque data from these sensors while the robot executes random trajectories, and use that data to compensate for errors in the readings caused by gravitational and inertial loads. This helps eliminate false positives when detecting whether the robot is interacting with an object, and supports an efficient feedback mechanism for closed-loop grasping controllers. Additionally, I am developing bimanual grasping controllers for this robot.
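As a rough illustration of the gravity part of this compensation, the sketch below fits the mass, center of mass, and constant biases of whatever hangs off a wrist sensor from readings taken at many orientations, then subtracts the predicted load. It is a minimal static model under stated assumptions, not the method used on the uBot: inertial loads are ignored, and the function names, the `G_WORLD` constant, and the convention that each rotation maps sensor frame to world frame are all hypothetical.

```python
import numpy as np

# Assumed gravity vector in the world frame (z up), in m/s^2.
G_WORLD = np.array([0.0, 0.0, -9.81])


def skew(v):
    """Return the 3x3 matrix S such that S @ w == np.cross(v, w)."""
    x, y, z = v
    return np.array([[0.0, -z, y], [z, 0.0, -x], [-y, x, 0.0]])


def fit_gravity_model(rotations, forces, torques):
    """Least-squares fit of payload mass, center of mass, and sensor biases.

    rotations: list of 3x3 matrices mapping sensor frame to world frame.
    forces, torques: (N, 3) raw readings taken with the hand not in contact.
    Static model (hypothetical): f = m * R^T g + f_bias,
                                 tau = c x (m * R^T g) + tau_bias.
    """
    g_sensor = [R.T @ G_WORLD for R in rotations]

    # Force equations are linear in [m, f_bias].
    A_f = np.vstack([np.hstack([g.reshape(3, 1), np.eye(3)]) for g in g_sensor])
    x_f, *_ = np.linalg.lstsq(A_f, np.asarray(forces).reshape(-1), rcond=None)
    mass, f_bias = x_f[0], x_f[1:]

    # Torque equations are linear in [c, tau_bias], since c x f == -skew(f) @ c.
    A_t = np.vstack([np.hstack([-skew(mass * g), np.eye(3)]) for g in g_sensor])
    x_t, *_ = np.linalg.lstsq(A_t, np.asarray(torques).reshape(-1), rcond=None)
    com, tau_bias = x_t[:3], x_t[3:]
    return mass, com, f_bias, tau_bias


def compensate(R, force, torque, mass, com, f_bias, tau_bias):
    """Subtract the bias and the predicted gravity load from one reading."""
    f_gravity = mass * (R.T @ G_WORLD)
    return force - f_bias - f_gravity, torque - tau_bias - np.cross(com, f_gravity)
```

After compensation, a reading that stays near zero suggests free motion, while a residual above some threshold suggests contact. Varied orientations matter here: if the sensor only ever sees gravity from one direction, the mass and the force bias cannot be separated.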


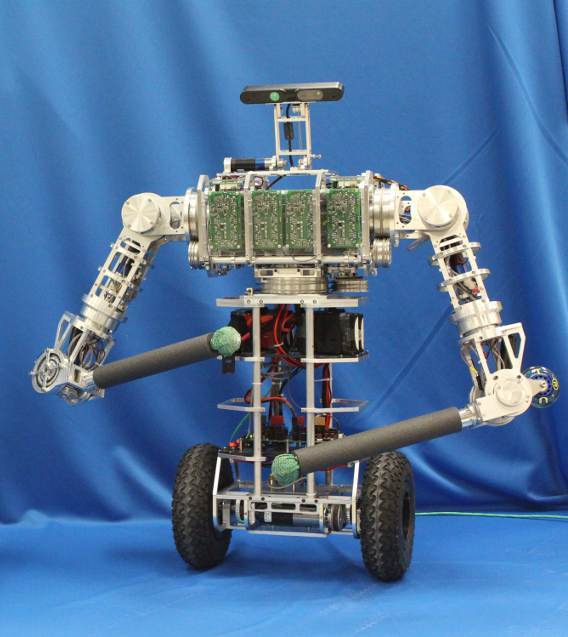

Siemens Humanoid

This is a three-wheeled humanoid demo platform developed at Siemens Corporate Technology, Munich, Germany. During my summer internship at Siemens in 2018, I developed a follow-me application for this robot, in which the robot uses laser scans of its environment to find the nearest human and follows that person around a predefined map of the room using the ROS navigation stack. Additionally, I used MoveIt to build a bimanual clock pick-and-place application on this robot.
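One simple way to pick a follow target from a planar laser scan is sketched below. It is an assumption-laden illustration rather than the detector used at Siemens: it groups neighbouring scan points into clusters, keeps clusters whose width is plausible for a leg or torso, and returns the centroid of the nearest one. The function name and the `gap`, `min_width`, and `max_width` thresholds are hypothetical; `angle_min` and `angle_increment` mirror the fields of a ROS `sensor_msgs/LaserScan` message.

```python
import numpy as np


def nearest_person_candidate(ranges, angle_min, angle_increment,
                             max_range=8.0, gap=0.15,
                             min_width=0.05, max_width=0.6):
    """Return the (x, y) centroid of the nearest person-sized cluster, or None.

    All thresholds are illustrative guesses in metres, not tuned values.
    """
    ranges = np.asarray(ranges, dtype=float)
    angles = angle_min + angle_increment * np.arange(len(ranges))
    valid = np.isfinite(ranges) & (ranges > 0.0) & (ranges < max_range)
    r = np.where(valid, ranges, 0.0)  # avoid inf/nan arithmetic on bad beams
    pts = np.column_stack([r * np.cos(angles), r * np.sin(angles)])

    # Split the scan into runs of consecutive valid points that are close together.
    clusters, current = [], []
    for i in range(len(ranges)):
        if not valid[i]:
            if current:
                clusters.append(current)
                current = []
            continue
        if current and np.linalg.norm(pts[i] - pts[current[-1]]) > gap:
            clusters.append(current)
            current = []
        current.append(i)
    if current:
        clusters.append(current)

    # Keep person-sized clusters and pick the one closest to the sensor.
    best = None
    for idx in clusters:
        width = np.linalg.norm(pts[idx[-1]] - pts[idx[0]])
        if not (min_width <= width <= max_width):
            continue
        centroid = pts[idx].mean(axis=0)
        if best is None or np.linalg.norm(centroid) < np.linalg.norm(best):
            best = centroid
    return best
```

The returned point is in the laser frame. In a ROS setup it would typically be transformed into the map frame and sent to the navigation stack as a goal placed a short distance in front of the person.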


Firebird V

I worked on this mobile manipulator, built by Nex Robotics, as part of the e-Yantra Robotics Competition 2015, run by IIT Bombay, India. The robot was equipped with multiple long-range infrared sensors, and my team built a robotic arm from servo motors to pick up the cargo blocks. The task was to traverse a predefined grid, looking for cargo blocks and checking whether each one was in the required orientation. If a block was not in the correct orientation, the robotic arm had to pick it up, turn it around, and place it back correctly. The robot also had to travel from its source to its destination in the shortest time.
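The path-planning part of such a task can be sketched with a breadth-first search over grid cells. This is only an illustration: the planner my team actually used is not described here, and the grid encoding and the function name `shortest_grid_path` are hypothetical. It also assumes every move between adjacent cells costs the same, so the fewest-moves path stands in for the fastest one.

```python
from collections import deque


def shortest_grid_path(blocked, start, goal):
    """Breadth-first search on a 4-connected grid.

    blocked: 2D list where a truthy value marks a cell the robot cannot enter.
    start, goal: (row, col) tuples.
    Returns the list of cells from start to goal, or None if unreachable.
    """
    rows, cols = len(blocked), len(blocked[0])
    parent = {start: None}  # also serves as the visited set
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if (0 <= nr < rows and 0 <= nc < cols
                    and not blocked[nr][nc] and nxt not in parent):
                parent[nxt] = cell
                queue.append(nxt)
    return None
```

On a real robot, turns usually cost more time than straight segments, so a weighted search that penalises direction changes may match "shortest time" better than a plain move count.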

Robots I Have Built

Octocopter

This was an aerial robot research platform built during my undergraduate summer internship at the School of Vehicle Mechatronics, TU Dresden, Germany, in 2016. In three months, I built an octocopter using the Pixhawk V4 flight controller. The drone had a flight time of about 20 minutes at its current weight and a maximum payload capacity of 8 kg. It could take off and land autonomously and performed waypoint tracking using GPS. All the safety features provided by the Pixhawk V4 controller were enabled and tested. I also designed landing gear for the drone out of plexiglass.

Surveillance Hovercraft

This was a prototype hovercraft that could perform environmental monitoring using a Waspmote Gas Sensor Board and video surveillance using an onboard webcam and an Intel Galileo board, transmitting the sensor data over XBee and the video over Wi-Fi. Because of its potential use in post-disaster surveillance, it was awarded the Best Project Award at the Intel India Innovation Challenge, 2014.
